Build a Compiler from Scratch, Part 1.2: Intermediate Representation and Code Generation

The frontend part of our compiler is complete, and we can parse the source code of a pylite program into an AST. This leaves us with a final task: translating the program described by the AST into assembly code. Technically, we could generate assembly code directly from the AST, but this poses a few problems, in particular:

- the structure of the AST is dictated by the syntax of the source language, and does not lend itself especially well to the tasks we need to perform before generating the assembly code, such as control-flow analysis, optimization, and generally breaking-up high-level operations into sequences of assembly instructions

- if we want to extend our compiler to support more CPU architectures, we would need to duplicate the entire code generation logic for each architecture, thus hurting the maintainability of the codebase

For these reasons, we'll split the process of generating assembly code into multiple steps. First, we'll translate the AST into an intermediate representation (IR) that is more suitable for optimization and code generation. Then, we'll perform optimization passes on the IR, and finally we'll generate assembly code from the optimized IR. The process of translating the AST into the IR is often referred to as "lowering" the AST into the IR.

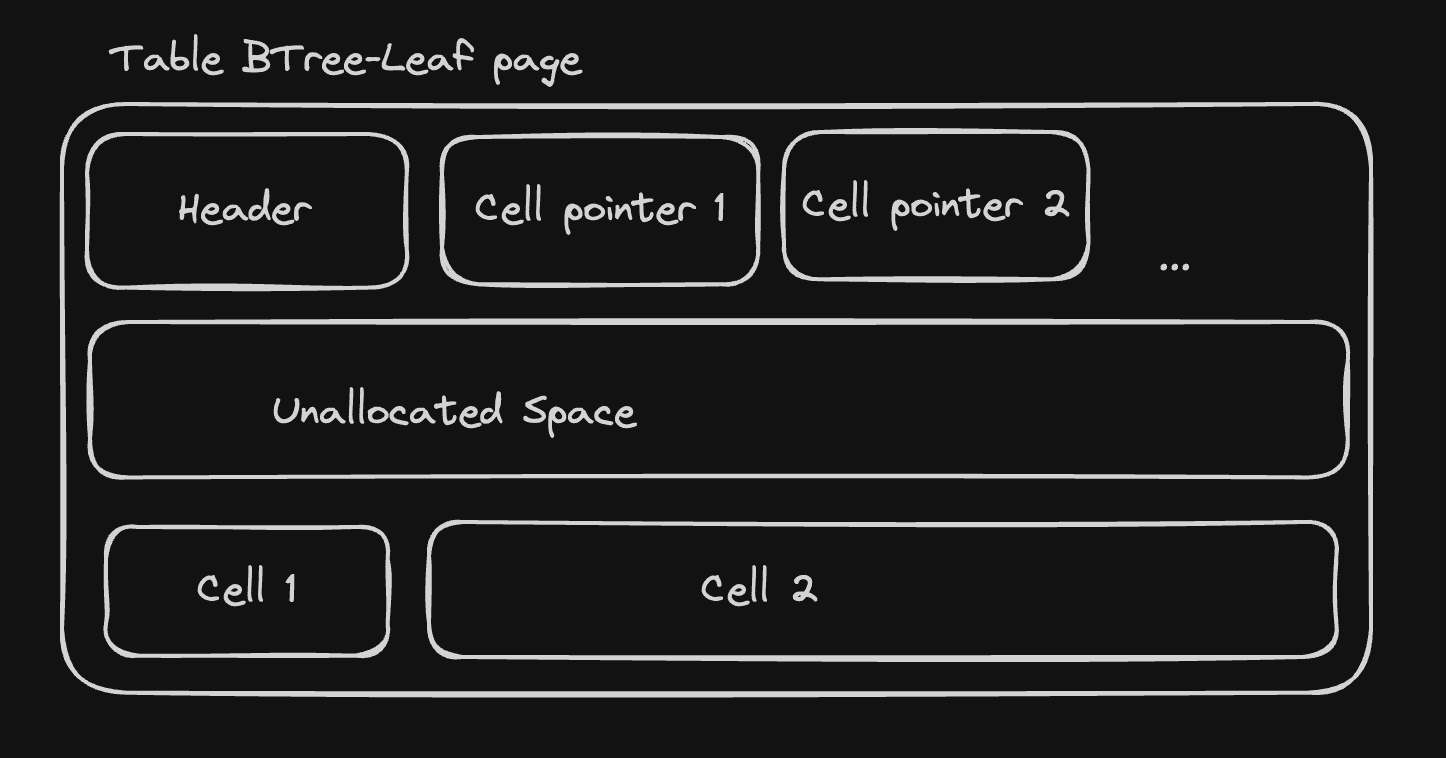

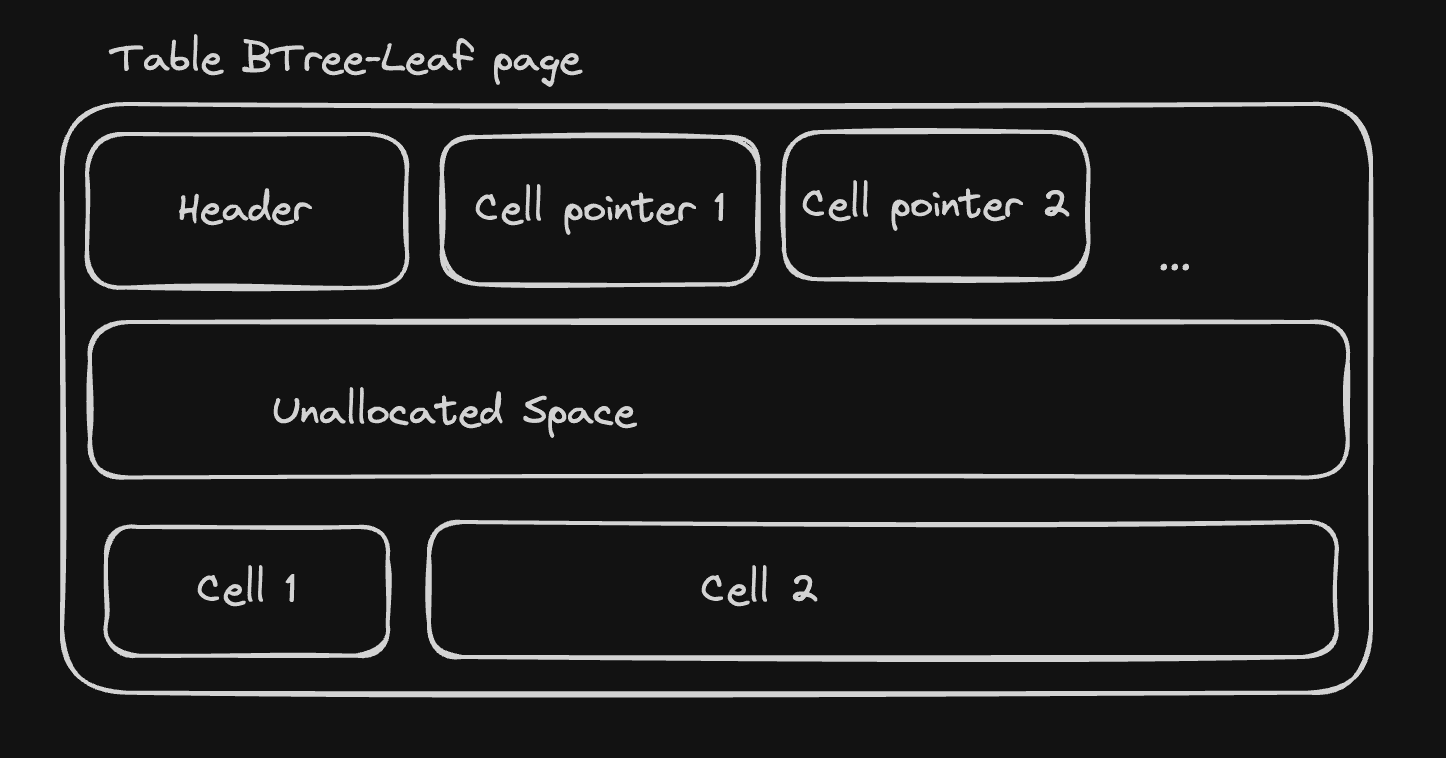

What will our IR look like? On one end of the spectrum, we could design a very high-level IR that closely resembles the source language, and on the other we could design a low-level linear IR that mimics the assembly code. We'll go for a hybrid graph-based approach, where branchless sections of the code are represented as linear sequences of low-level instructions -which we'll call "basic blocks"- and conditional jumps are represented as edges between the basic blocks. This structure is called a control-flow graph (CFG).

As an example, let's take the following Pylite program, which computes nth value of the Fibonacci sequence:

def fib(n: int) -> int:

a = 1

b = 1

while n > 0:

c = b

b = a + b

a = c

n = n - 1

return a

The corresponding CFG is shown below:

┌───────┐

│ start │

└───┬───┘

│

▼

┌───────┐

│ a = 1 │

│ b = 1 │

└───┬───┘

│

▼

┌───────┐ False ┌──────────┐

┌──────▶│ n > 0 │────────▶│ return a │

| └───┬───┘ └──────────┘

| │ True

| ▼

| ┌───────────────┐

| │ c = b │

| │ b = a + b │

| │ a = c │

| │ n = n - 1 │

| └────┬──────────┘

| │

└─────────┘

You'll notice that control-flow constructs -such as the while in our function definition-

are absent from the basic blocks. This is because they are represented as edges between the basic blocks.

For now our CFGs will be much simpler, as our function bodies will only contain a single return statement.

Let's start by defining the IR nodes:

//! compiler/src/ir/nodes.rs

#[derive(Debug, Clone)]

pub enum Decl {

Function(Function),

}

#[derive(Debug, Clone)]

pub struct Function {

pub name: String,

pub cfg: Cfg,

}

#[derive(Debug, Clone)]

pub struct Cfg {

pub blocks: Vec<BasicBlock>,

}

#[derive(Debug, Clone)]

pub struct BasicBlock {

pub instructions: Vec<Instruction>,

}

#[derive(Debug, Clone)]

pub enum Instruction {

Return(Operand),

}

#[derive(Debug, Clone)]

pub enum Operand {

Immediate(i64),

}

Here is the IR representation of our simple Pylite main function:

Function(

Function {

name: "main",

cfg: Cfg {

blocks: [

BasicBlock {

instructions: [

Return(

Immediate(

1,

),

),

],

},

],

},

},

),

At this stage, there is a direct corespondance between the AST nodes and their IR

counterparts. Let's build a Generator struct to perform the mapping between

the two representations. It's only public method generate will translate a single

AST Decl into the matching IR construct.

use crate::ast;

use super::nodes::{BasicBlock, Cfg, Decl, Function, Instruction, Operand};

pub struct Generator {

instructions: Vec<Instruction>,

}

impl Generator {

pub fn new() -> Self {

Self {

instructions: Vec::new(),

}

}

pub fn generate(mut self, decl: &ast::Decl) -> Decl {

match &decl.kind {

ast::DeclKind::Function(f) => {

self.generate_function(f);

Decl::Function(Function {

name: f.name.name.clone(),

cfg: Cfg {

blocks: vec![BasicBlock {

instructions: self.instructions,

}],

},

})

}

}

}

fn generate_function(&mut self, function: &ast::FunDecl) {

for stmt in &function.body {

self.generate_stmt(stmt);

}

}

fn generate_stmt(&mut self, stmt: &ast::BlockStatement) {

match &stmt.kind {

ast::BlockStatementKind::Return { value } => {

let operand = self.generate_expr(value);

self.instructions.push(Instruction::Return(operand))

}

}

}

fn generate_expr(&mut self, stmt: &ast::Expr) -> Operand {

match &stmt.kind {

ast::ExprKind::IntLit { value } => Operand::Immediate(*value),

}

}

}

Assembly language 101

At the end of the compilation pipeline, our compiler will output assembly code. Assembly code is a textual representation of the machine code that's executed by the CPU. Therefore, assembly code is specific to a given CPU architexture, and the assembly code generated by our compiler will only run natively on x86 CPUs (which is a prevalent architecture in both personal computers and servers).

By the end of this section, you'll know enough about assembly programming to

understand each line of the assembly equivalent of our Pylite main function.

Your first assembly program

.globl main ; on macOs replace with .globl _main

main: ; on macOs replace with _main:

mov rax, 1

ret

This is the assembly equivalent of our Pylite main function.

In assembly, each line represents a single instruction or assembler directive,

and instructions are executed sequentially, from top to bottom. Let's break this

down line by line:

.globl main

This line is an assembler directive that declares the main label as a global symbol.

By default, symbols are local to the source file they are defined in, so referencing

main from another source file would result in an error.

In this case, there is only one source file, so why do we need to declare main as a global

symbol? Well,main is the actual entry point of our program: the entry point is

the _start symbol, defined in an object file named crt0.o (C runtime zero).

crt0 is part of the C standard library, and it is responsible for setting up the

execution environment of our program before invoking our main function.

main:

This is the definition of the main label. Unlike mainstream programming languages,

assembly does not have functions or procedures, but instead uses labels to mark

locations in the code that can be used as targets for jumps and calls.

We'll make extensive use of labels when implementing control flow structures

and function calls in our compiler.

On macOS, the label must be prefixed with an underscore, so it would be

_main:instead ofmain:.

mov rax, 1

This instruction moves the immediate value 1 into the rax register.

Registers are small, fast and fixed-size storage locations within the CPU that are used to hold

values that are being processed. There is a fixed set of registers, some of which

are "general-purpose" registers, meaning that they can be used for almost any operation,

while others are specialized and have very specific behaviors.

rax is one of the general-purpose registers, and like most registers in a 64-bit CPU architecture,

it can hold 64 bits of data. There are 15 other general-purpose registers, named

rbx, rcx, rdx, rsi, rdi, rsp, rbp, and r8 to r15.

Don't feel compelled to memorize the full list right now, as we'll discuss a

lot more about registers in the next chapters.

ret

Through a mechanism that we'll explore in greater depth in the next chapters, ret orders the CPU to

resume execution from the point where we jumped to the main label. In this case, it

will return to the code in crt0, which calls the exit system call with the value

in rax as the exit code of the program.

Building the executable

We'll use gcc to assemble and link our assembly code. The following sections

will show you how to set up your environment to assemble and run the assembly

code generated by our compiler.

macOS setup

On macOS, we'll use the homebrew package manager to install gcc. To install,

homebrew, follow the instructions on the official website.

You can check your installation by running the following command in your terminal:

$ brew --version

Once homebrew is installed, you can install gcc by running:

$ brew install gcc

If your computer is running an x86 CPU, you're all set! But if you have an Apple Silicon

CPU, there is one last thing to be aware of: while it is perfectly fine to run the

compiler on an Apple Silicon CPU, the assembly code generated by our compiler

will only run on x86 CPUs. To run the generated assembly code, you'll need to

use Rosetta 2, which is a translation layer that allows x86 code to run on Apple Silicon CPUs.

You can install Rosetta by running the following command in your terminal:

$ softwareupdate --install-rosetta

Once Rosetta 2 is installed, you can run gcc as an x86 binary by first opening

an x86 shell and running gcc from there:

$ arch -x86_64 zsh

$ <your_gcc_invocation>

Linux setup

On Linux, you can install gcc using your distribution's package manager.

For example, on Ubuntu, you can run the following command:

$ sudo apt install gcc

You can check your installation by running the following command:

$ gcc --version

Windows setup

Our compiler will not run natively on Windows, but you can use the Windows Subsystem for

Linux (WSL) to run it. WSL allows you to run a Linux distribution on Windows, so you can

use the same instructions as for Linux to install gcc and run the compiler.

To install WSL, follow the instructions on the official website.

Assembling and linking the assembly code

For this section, we'll assume that our assembly code is saved in a file named return_code.s.

We can assemble and link the assembly code with a single gcc command:

$ gcc -masm=intel return_code.s -o return_code

It instructs gcc to assemble and link the code in return_code.s, and produces an

executable named return_code. The -masm=intel flag tells gcc to use the Intel syntax

for the assembly code, which is the syntax used in our compiler. By default, gcc uses the

AT&T syntax, which is slightly different.

We finally have a working executable! You can run it by executing the following command:

$ ./return_code

And predictably... nothing happens. Our program runs and exits, but is does not print anything to the console. To verify that it worked properly, we can inspect the exit code of the previous command by running:

$ echo $?

1

It prints 1, which is the value we returned from the main function.

You can try to substitute 1 with any other small integer in the assembly code,

rerun gcc, and run the executable again to see the exit code change accordingly.

Code generation

We're ready to build the final piece of our compiler: the code generator. It will take the IR representation of our program as input, and generate the corresponding x86 assembly code. This will be a two-step process: first, we'll translate the IR data structure into another data structure representing the linear sequence of assembly instructions, then we'll emit the actual textual assembly code.

As we'll discover in the next chapters, splitting this process into two steps will allow us to perform some transformations at the assembly level before emitting the final assembly code.

The final assembly code for our Pylite main function will look like this:

.globl main

main:

mov rax, 1

ret

We'll define a few rust types to represent assembly instructions and operands.

//! compiler/src/codegen/asm.rs

#[derive(Debug, Clone)]

pub struct Function {

pub label: String,

pub instructions: Vec<Instruction>,

}

#[derive(Debug, Clone)]

pub enum Instruction {

Mov { src: Operand, dst: Operand },

Ret,

}

#[derive(Debug, Clone)]

pub enum Operand {

Register(Register),

Immediate(i64),

}

#[derive(Debug, Copy, Clone)]

pub enum Register {

Rax,

}

impl std::fmt::Display for Register {

fn fmt(&self, f: &mut std::fmt::Formatter<'_>) -> std::fmt::Result {

match self {

Register::Rax => write!(f, "rax"),

}

}

}

To translate the IR into assembly instructions, we need to iterate over each

basic block of a function's CFG and generate the corresponding assembly Instruction.

To that end, we'll define a FnGenerator struct that wraps a function's IR representation

and a mutable assembly instructions buffer and iterates over the basic block's instructions,

pushing the corresponding assembly instructions into the buffer.

We'll also create a utility function that instantiates a FnGenerator for every function

in the IR program and returns a Vec of assemblyFunction structs, each containing the

function's label and the corresponding assembly instructions.

use crate::ir;

use super::asm::{Function, Instruction, Operand, Register};

struct FnGenerator<'f> {

function: &'f ir::Function,

instructions: Vec<Instruction>,

}

impl<'f> FnGenerator<'f> {

fn new(function: &'f ir::Function) -> Self {

Self {

function,

instructions: Vec::new(),

}

}

fn generate(mut self) -> Vec<Instruction> {

for block in &self.function.cfg.blocks {

self.generate_block(block);

}

self.instructions

}

fn generate_block(&mut self, block: &ir::BasicBlock) {

for instruction in &block.instructions {

match &instruction {

ir::Instruction::Return(op) => {

let operand = self.generate_operand(op);

self.push(Instruction::Mov {

src: operand,

dst: Operand::Register(Register::Rax),

});

self.push(Instruction::Ret)

}

}

}

}

fn generate_operand(&mut self, operand: &ir::Operand) -> Operand {

match operand {

ir::Operand::Immediate(value) => Operand::Immediate(*value),

}

}

fn push(&mut self, instruction: Instruction) {

self.instructions.push(instruction);

}

}

pub fn generate(program: &[ir::Decl]) -> Vec<Function> {

program

.iter()

.map(|decl| {

let ir::Decl::Function(f) = decl;

Function {

label: f.name.clone(),

instructions: FnGenerator::new(f).generate(),

}

})

.collect()

}

The final step before we can assemble and run our program is to render the assembly code into its textual representation.

One thing to note is that the format of labels is inconsistent across platforms: on macOS function labels must start with an underscore, which is not the case on Linux. To make our code portable, we'll use conditional compilation, and define two different implementations of the function that renders function labels: one that prepends an underscore to the label on macOS, and an other that returns the label as is on Linux.

//! compiler/src/codegen/render.rs

#[cfg(target_os = "macos")]

fn format_fun_label(label: &str) -> String {

format!("_{}", label)

}

#[cfg(target_os = "linux")]

fn format_fun_label(label: &str) -> String {

label.to_string()

}

With this in place, we can implement our render_program function, which takes a slice

of assembly Function structs and returns the textual representation of the assembly code.

//! compiler/src/codegen/render.rs

use super::asm::{Function, Instruction, Operand};

pub fn render_program(program: &[Function]) -> String {

let mut code = String::new();

for function in program {

code.push_str(&render_function(function));

}

code

}

fn render_function(function: &Function) -> String {

let label = format_fun_label(&function.label);

let mut code = format!("\t.globl {label}\n{label}:\n");

for instruction in &function.instructions {

let rendered = render_instruction(instruction);

code.push_str(&format!("\t{rendered}\n"));

}

code

}

fn render_instruction(instruction: &Instruction) -> String {

match instruction {

Instruction::Mov { src, dst } => {

format!("mov {}, {}", render_operand(dst), render_operand(src))

}

Instruction::Ret => "ret".to_string(),

}

}

fn render_operand(operand: &Operand) -> String {

match operand {

Operand::Register(register) => register.to_string(),

Operand::Immediate(i) => i.to_string(),

}

}

// [...]

Putting it all together

At this stage, we have all the components of a complete compiler.

Let's put it to the test by writing a straightforward main function that

binds all of these components to turn our Pylite code into an assembly program:

//! compiler/src/main.rs

mod ast;

mod codegen;

mod ctx;

mod db;

mod error;

mod id;

mod ir;

mod parse;

use id::UniqueIdGenerator;

fn main() {

// Read the input source code

let input_file = std::env::args().nth(1).expect("missing input file");

let source_code = std::fs::read_to_string(&input_file).expect("failed to read input file");

let id_gen = UniqueIdGenerator::default();

// Parse the code into an Abstract Syntax Tree

let ast_statements = parse::parser::Parser::new(id_gen.clone(), &source_code)

.parse_module()

.expect("failed to parse module");

// Lower the AST to the Intermediate Representation

let ir_statements = ast_statements

.iter()

.map(|stmt| {

let ast::StatementKind::Decl(decl) = &stmt.kind;

ir::gen::Generator::new().generate(decl)

})

.collect::<Vec<_>>();

// Generate and print the assembly code

let asm = codegen::gen::generate(&ir_statements);

println!("{}", codegen::render::render_program(&asm));

}

With our input program in res/samples/return_const/main.py, we can run our compiler and inspect it's output

using the following commands:

$ cargo run -- res/samples/return_const/main.py > out.s

$ cat out.s

.globl _main

_main:

mov rax, 1

ret

It seems that the compilation was successful! We can execute the program and observe its output code by running the following commands:

$ gcc -masm=intel out.s -o main

$ ./main

$ echo $?

1

If you are using a mac with an Apple Silicon processor, remember to run

arch -x86_64 zshbefore typing these commands.

We just ran our first Pylite program!

In the next section, we'll start laying the foundations for a more robust

architecture.